A senior product team for founders who need the full build: UX, full-stack, AI, evals, guardrails, deployment, and SOC2 readiness.

Sprint $4,999 · Security Assessment $2,499 · Fractional CTO $9,999/mo

Built for

Industries we work with

Biochar kilns, pump dealerships, retail aisles, hardware programs, plant floors, and AI SaaS runtimes all have different truths.

Operating context

Kiln batches, buyer evidence, carbon receipts.

MRV, feedstock intake, batch evidence, buyer workflows, and carbon-credit reporting.

Operating context

Dealer queues and field service made visible.

Dealer operations, service telemetry, warranty intelligence, and field-team dashboards.

Operating context

Demand signals instead of stale reports.

Inventory copilots, CRM automation, demand signals, and store-level operating views.

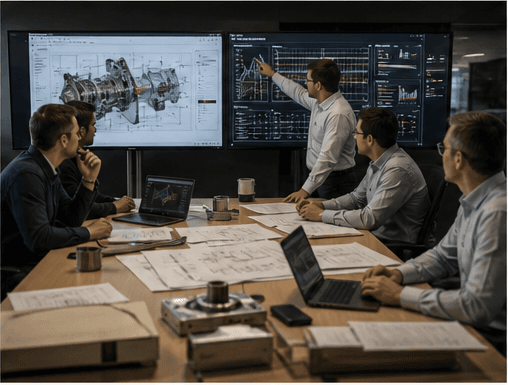

Operating context

BOM changes, routings, and station work made executable.

Forge-style systems for EBOM-to-MBOM handoff, ECO review, supplier impact, routing release, line readiness, traveler signoff, and signed audit trails.

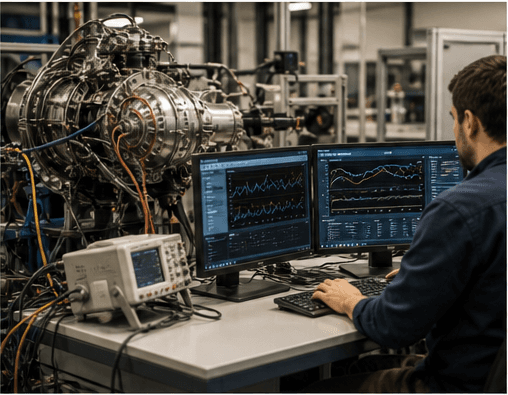

Operating context

Plant-floor exceptions with owners attached.

Ops dashboards, audit trails, maintenance workflows, and manager-facing control planes.

Operating context

Agentic products with runtime truth built in.

Agents, RAG, evals, observability, billing, roles, and production app architecture.

Selected Work

Agentic Agency

In the last 2 months, we turned workflows into reusable skills and packaged them as plugins. That's how we run delivery, content, QA, and outbound as coordinated teams of agents.

Product gallery

Five recent builds are presented below as an interactive board rather than a long scroll rail. Each case opens into a larger mounted view with real screenshot detail.

Active case study

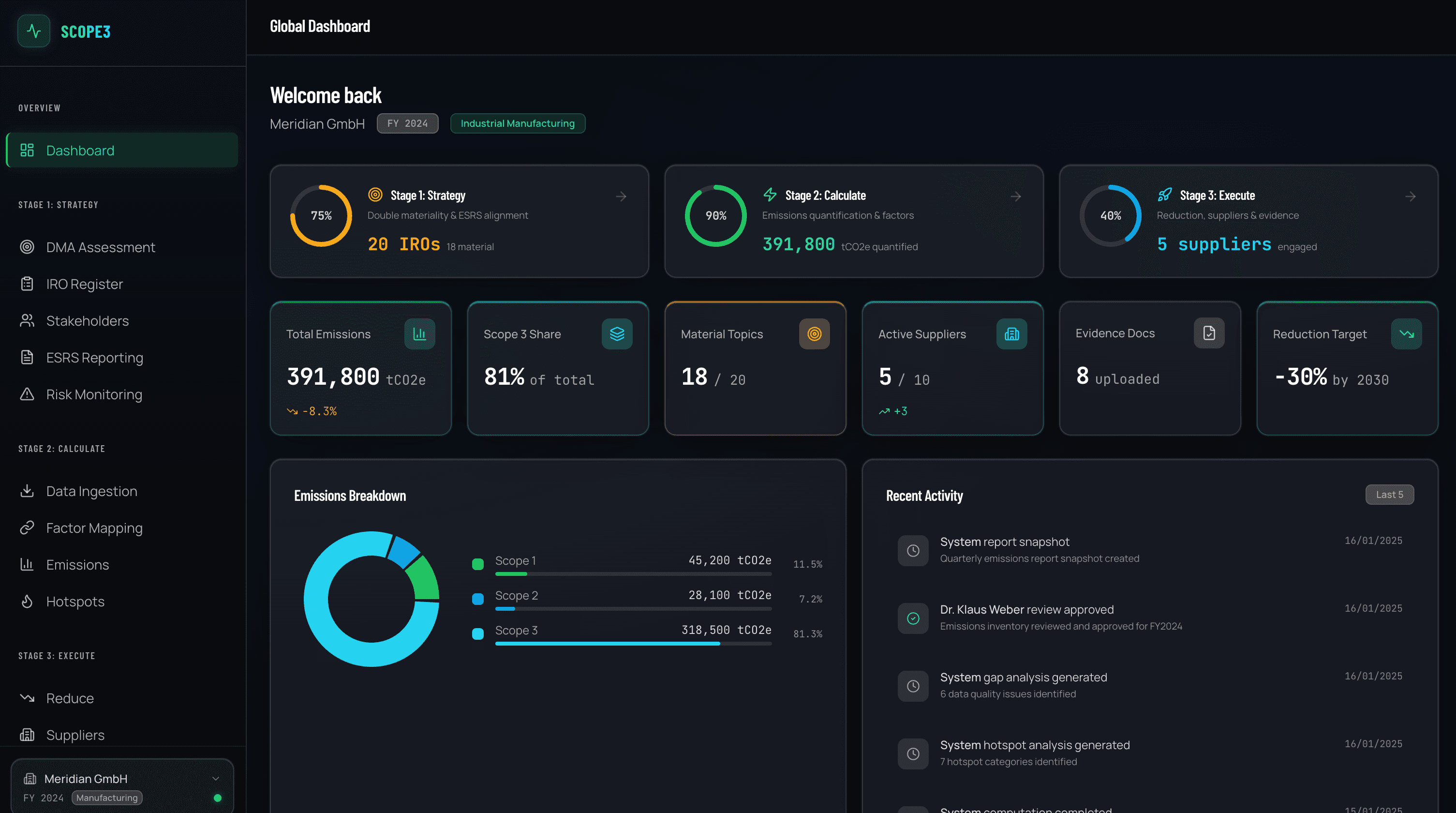

Sustainability SaaS

Emissions Dashboard

Full-stack carbon accounting platform with real-time emissions tracking, supplier management, and ESRS-compliant reporting.

Highlights

Real-time emissions rollups

Supplier mix explorer

ESRS-ready reporting exports

AI MVP Reality

Demos are easy. Shipping AI that users can trust takes guardrails, evals, and latency budgets that hold up outside the lab.

Design for the edge, not the demo.

Budget latency before features.

Treat evals as a live operating system.

Local TTS and VLM quality lag behind cloud, and multilingual coverage stays uneven for privacy-first teams.

The first compromise shows up in quality, coverage, and trust.

Cold starts and multi-hop pipelines blow latency budgets, making real-time experiences feel unstable.

Fast demos become slow products the moment load rises.

Agentic flows loop or break because outputs are not deterministic. Debugging turns into archaeology.

The system looks clever until one branch starts to drift.

Teams want evals that reflect user feedback and business KPIs, not only offline accuracy scores.

Without live signals, teams optimize the wrong thing.

Provider APIs ignore parameters and rate-limit unpredictably. Fallbacks stop being optional.

Reliability depends on contracts you do not control.

Generative UI and content pipelines drift toward hallucinations without tight constraints.

Loose generation erodes consistency faster than teams expect.

We ship MVPs fast without brittle AI. Retrieval, evals, observability, and cost controls are part of the build.

Scope lock

Plan and commercial frame get nailed before delivery expands.

Core ship

Real product logic, integrations, and data model land in week two.

Live handoff

Launch, regression pass, and production-ready transfer happen in week three.

3-week sprint

Brief, build, and launch.

The commercial frame, delivery pace, and operating guardrails are set before the build starts stretching.

Timeline

3 weeks from discovery to live MVP

Commercials

Fixed price, scope locked before build expands

Guardrails

Built in: retrieval, evals, tracing, budgets

Week 1

Frame the product

Week 2

Ship the core loop

Week 3

Harden and launch

Proof-first delivery

Sprint rhythm

Fewer meetings, fewer mystery phases. Each week has a visible job and a visible outcome.

We lock scope, user flow, and system shape before code starts branching.

The MVP turns real: product logic, schema, integrations, and the main user path.

We run the checks, deploy to production, and hand over something usable on day one.

Built into the sprint

These are not add-ons after launch. They are part of the delivery baseline.

Chunking, re-ranking, and citations keep answers tied to source material.

Golden sets, regression checks, and red-team prompts keep quality measurable.

Schemas, tool contracts, and validation loops keep agent output predictable.

Routing, streaming, caching, and fallbacks keep spend and latency in check.

Prompt and tool traces make debugging and iteration much faster.

State, retries, and idempotent tools keep multi-step runs reliable.
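To make the last point concrete, here is a minimal sketch of how retries and idempotent tools can work together. Everything in it is hypothetical and for illustration only: `IdempotentTool`, `with_retries`, the `send_receipt` function, and the `batch-42:receipt` key are assumed names, and the in-memory result store stands in for whatever durable state a real runtime would use.

```python
import time
from typing import Callable, Dict, List, Optional


class IdempotentTool:
    """Wraps a side-effecting function so one key triggers it at most once."""

    def __init__(self, fn: Callable[[str], str]) -> None:
        self._fn = fn
        # Assumption: an in-memory dict; a real system would persist this.
        self._results: Dict[str, str] = {}

    def call(self, key: str, payload: str) -> str:
        if key in self._results:
            # Replay the stored result instead of repeating the side effect.
            return self._results[key]
        result = self._fn(payload)
        self._results[key] = result
        return result


def with_retries(step: Callable[[], str], attempts: int = 3,
                 base_delay: float = 0.0) -> str:
    """Run a step, retrying on RuntimeError with exponential backoff."""
    last_error: Optional[Exception] = None
    for attempt in range(attempts):
        try:
            return step()
        except RuntimeError as err:
            last_error = err
            time.sleep(base_delay * (2 ** attempt))
    raise RuntimeError(f"step failed after {attempts} attempts") from last_error


sent: List[str] = []  # records every real side effect


def send_receipt(payload: str) -> str:
    sent.append(payload)
    return f"sent:{payload}"


tool = IdempotentTool(send_receipt)
failures = {"left": 1}


def flaky_step() -> str:
    result = tool.call("batch-42:receipt", "batch-42")
    if failures["left"] > 0:
        # Simulate a timeout that happens after the tool already ran.
        failures["left"] -= 1
        raise RuntimeError("timeout after the tool already ran")
    return result


final = with_retries(flaky_step)
```

The step runs twice, but the receipt is sent once: the retry hits the stored result for the same key rather than repeating the side effect.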

Operations dashboard

Shared memory, live traces, and runtime signals make the system legible while it is running.

Prompts, tools, and memory changes stay visible when runs hop between agents.

Queues, latency, and active jobs are readable while the product is running.

Retries, fallbacks, and budgets are designed into the runtime instead of added later.

Cross-agent context stays attached

Prompts, tools, and jobs in one view

Latency and cost boundaries stay explicit

Structure & Governance

We architect your AI infrastructure using a multi-layered approach. A central orchestrator directs specialized teams operating on top of a unified, compliant data plane.

Specialized Teams

AI Engineering · Growth & Content · Quality Monitoring · Systems Architecture

Orchestrator Factory

Task routing · Shared context · Policy enforcement

Universal Data Plane

Access control · Audit trail · Unified memory

Equilibrium Design

Every production multi-agent system is a game — agents with objectives, constraints, and incentives. We architect the equilibrium so the system converges to the right outcome by construction.

Nash equilibria, Stackelberg hierarchies, and observable payoffs are not academic abstractions. They are the engineering primitives behind agent systems that hold up under pressure.

Change the payoffs. Watch the equilibrium shift.

Both defect — the system converges to mutual loss. Redesign the payoffs.
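The "both defect" outcome can be checked mechanically. The sketch below assumes a textbook two-player prisoner's dilemma with illustrative payoff numbers (not figures from any real system) and searches for pure-strategy Nash equilibria. The `penalize_defection` helper is a hypothetical example of a payoff redesign.

```python
from itertools import product
from typing import Dict, List, Tuple

Action = str
Payoffs = Dict[Tuple[Action, Action], Tuple[float, float]]

ACTIONS: List[Action] = ["cooperate", "defect"]


def pure_nash(payoffs: Payoffs, actions: List[Action]) -> List[Tuple[Action, Action]]:
    """Return every action pair where neither player gains by deviating alone."""
    equilibria = []
    for a, b in product(actions, actions):
        u_a, u_b = payoffs[(a, b)]
        a_ok = all(payoffs[(alt, b)][0] <= u_a for alt in actions)
        b_ok = all(payoffs[(a, alt)][1] <= u_b for alt in actions)
        if a_ok and b_ok:
            equilibria.append((a, b))
    return equilibria


# Assumed payoffs: (row player, column player). Higher is better.
dilemma: Payoffs = {
    ("cooperate", "cooperate"): (3, 3),
    ("cooperate", "defect"): (0, 5),
    ("defect", "cooperate"): (5, 0),
    ("defect", "defect"): (1, 1),
}


def penalize_defection(payoffs: Payoffs, penalty: float) -> Payoffs:
    """Hypothetical redesign: subtract a fixed penalty from any defecting player."""
    redesigned: Payoffs = {}
    for (a, b), (u_a, u_b) in payoffs.items():
        redesigned[(a, b)] = (
            u_a - (penalty if a == "defect" else 0),
            u_b - (penalty if b == "defect" else 0),
        )
    return redesigned


before = pure_nash(dilemma, ACTIONS)
after = pure_nash(penalize_defection(dilemma, 3), ACTIONS)
```

With these numbers, `before` contains only mutual defection and `after` contains only mutual cooperation. The agents' reasoning is unchanged; only the payoffs moved.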

Switch allocation policies. See who games the system.

Proportional allocation creates inflation incentives. Agents learn to overstate need — the system diverges from truth.
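A small worked example shows the inflation incentive. It assumes the simplest form of proportional allocation, where each agent receives capacity in proportion to its stated claim. The agent names and numbers are made up for illustration.

```python
from typing import Dict


def proportional_allocation(claims: Dict[str, float], capacity: float) -> Dict[str, float]:
    """Split capacity across agents in proportion to what each one claims."""
    total = sum(claims.values())
    if total == 0:
        return {name: 0.0 for name in claims}
    return {name: capacity * claim / total for name, claim in claims.items()}


# Both agents truly need 40 units, but only 60 are available.
honest = proportional_allocation({"agent_a": 40, "agent_b": 40}, capacity=60)

# agent_a doubles its stated need; agent_b stays honest.
inflated = proportional_allocation({"agent_a": 80, "agent_b": 40}, capacity=60)
```

Under honest claims each agent gets 30 units. When `agent_a` overstates its need, it gets 40 and `agent_b` drops to 20, so the honest agent is the one who pays for the exaggeration.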

Toggle observability. Watch trust compound or collapse.

Observable payoffs compound trust. Both sides converge to cooperation.

Expand each layer. See who governs whom.

Each layer sets policy before the layers below optimize. The architecture is incentive-compatible by construction.

Services

Fixed scope when the work is bounded. Retainer when leadership matters. Custom scope when the brief needs room.

| Engagement | Term | Price | Best for |
| --- | --- | --- | --- |
| Retainer | Monthly | $9,999/mo | Funded teams scaling AI products |
| Sprint | 3 weeks | $4,999 | Solo founders and early teams shipping v1 |
| Assessment | 1 week | $2,499 | Pre-Series A teams entering enterprise |
| Custom | Scoped | Let’s talk | Enterprises and complex builds |

Retainer

Senior technical leadership for AI products: architecture, roadmap, hiring, and reliability.

Best for

Funded teams scaling AI products

For teams that need a driver.

Free architecture review on the first call

Sprint

Ship a product-ready MVP with full-stack architecture, AI guardrails, and automated testing.

Best for

Solo founders and early teams shipping v1

Limited slots. Fixed scope, fixed price.

We scope your MVP on a 20-min call

Assessment

VAPT and security architecture review with a prioritized remediation roadmap.

Best for

Pre-Series A teams entering enterprise

Upgrade to full SOC2 certification from $49,999.

Free 15-min security review on the first call

Custom

Multi-role AI workflows, complex integrations, mobile, or enterprise requirements.

Best for

Enterprises and complex builds

We’ll quote after a short call.

Free discovery call — no commitment